A rabbit hole comparing engineering and nature

...and finding lots of answers

I’ve never had a formal education in engineering. Yet I harbor a deep love for it.

Part of my learning process, and the source of my fascination with engineering, has been paying attention to nature. We’re asked to submit to this new and wonderful era of engineering being at the heart of solution-finding, and I’ve found myself constantly returning to nature to make sense of it all. You’d be surprised at what one learns. How bees organize themselves maps neatly onto how server capacity can be allocated. How bats hunt in the dark by echolocation, sweeping wide before homing in, maps onto how search algorithms explore broadly before tuning their parameters within a narrow region.

On and on.

For those interested, this is the current product of my endless rabbit hole: a love for nature transmuting into a love for engineering solutions. I presented this recently to a group of non-technical colleagues, and the reaction was interesting enough to warrant writing it down.

What follows is not exhaustive, and I am not an authority. But the central thesis is simple: the best engineering solutions are ones that nature arrived at first. Engineers just formalized what evolution had already perfected.

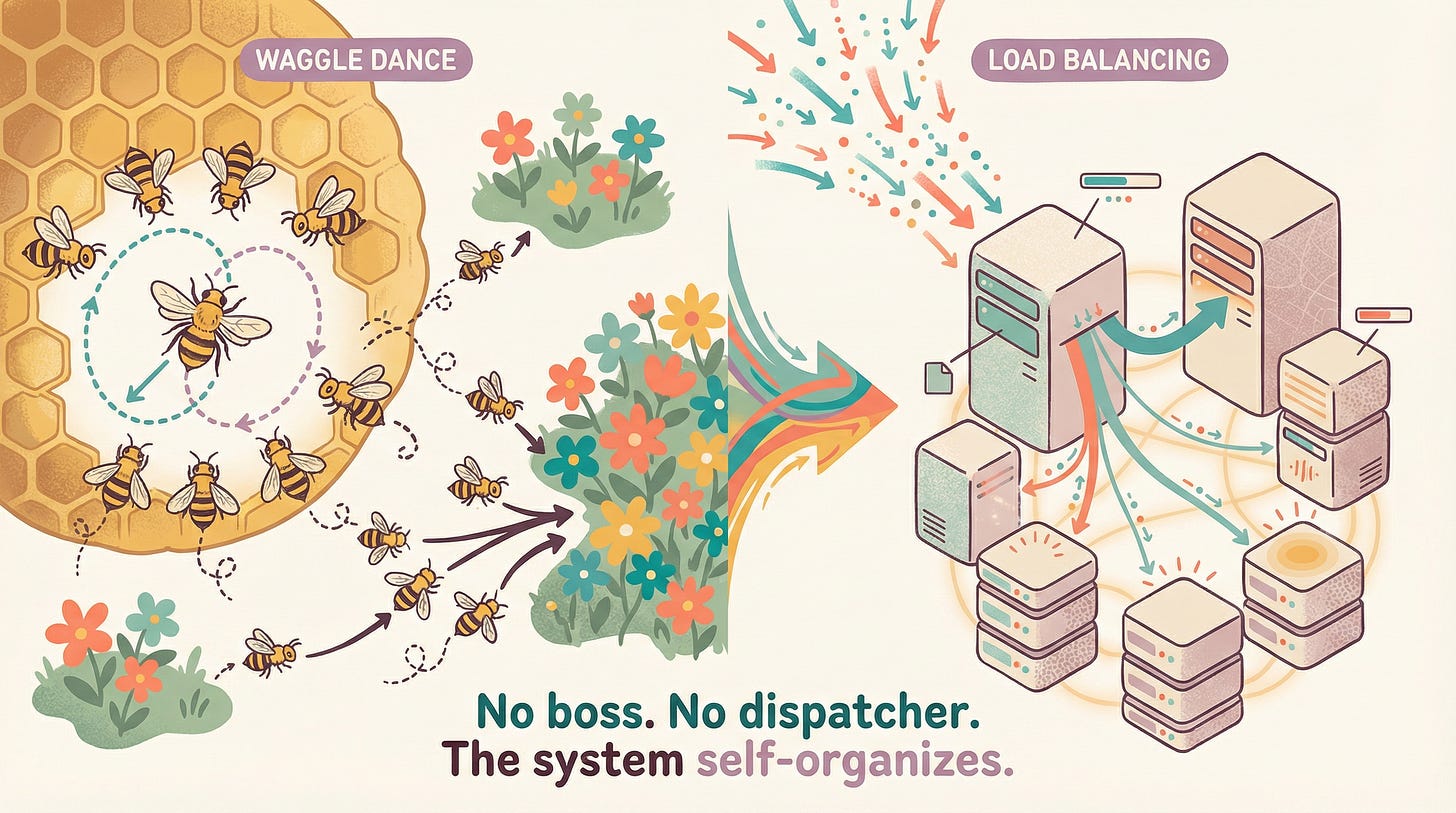

A honeybee colony is up to 60,000 individuals allocating themselves across dozens of flower patches, closely matching supply to demand, with no central controller.

Here’s how. A forager finds a good patch and returns to the hive. She performs a waggle dance — a figure-8 movement that encodes distance and direction. The richer the patch, the more vigorous the dance. Other bees watch. Each independently decides whether to follow based on the dance’s energy. No committee. No dispatcher. Just signal and response.

Sunil Nakrani and Craig Tovey saw this and built the Honeybee Algorithm for web server load balancing. Servers advertise their capacity through ‘dances’ (status signals). Requests route to servers the way bees route to flowers — decentralized, self-organizing, no single point of failure.
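To make the signal-and-response idea concrete, here is a minimal sketch of dance-weighted routing. It is a simplification of the description above, not Nakrani and Tovey's published algorithm: the names `pick_server` and `simulate`, the server labels, and the spare-capacity numbers are all made up for illustration.

```python
import random

def pick_server(dances, rng):
    """Choose a server with probability proportional to its 'dance' strength."""
    names = list(dances)
    weights = [dances[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]

def simulate(spare_capacity, n_requests, seed=0):
    """Route requests independently; no dispatcher tracks global state."""
    rng = random.Random(seed)
    counts = {name: 0 for name in spare_capacity}
    for _ in range(n_requests):
        counts[pick_server(spare_capacity, rng)] += 1
    return counts

# Hypothetical servers advertising how much spare capacity they have.
counts = simulate({"a": 8.0, "b": 2.0, "c": 1.0}, n_requests=10_000)
print(counts)
```

Each request decides on its own, the way each bee does. The server advertising the most capacity ends up with the most traffic, yet nothing in the code ever computes a global plan.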

I find this one particularly instructive because it demolishes a bias I used to carry: that complex systems need complex coordination. They don’t. They need good signals and agents with the freedom to respond to them. Auto-scaling architectures in the cloud today lean on a version of this insight, and a bee colony figured it out long before AWS existed.

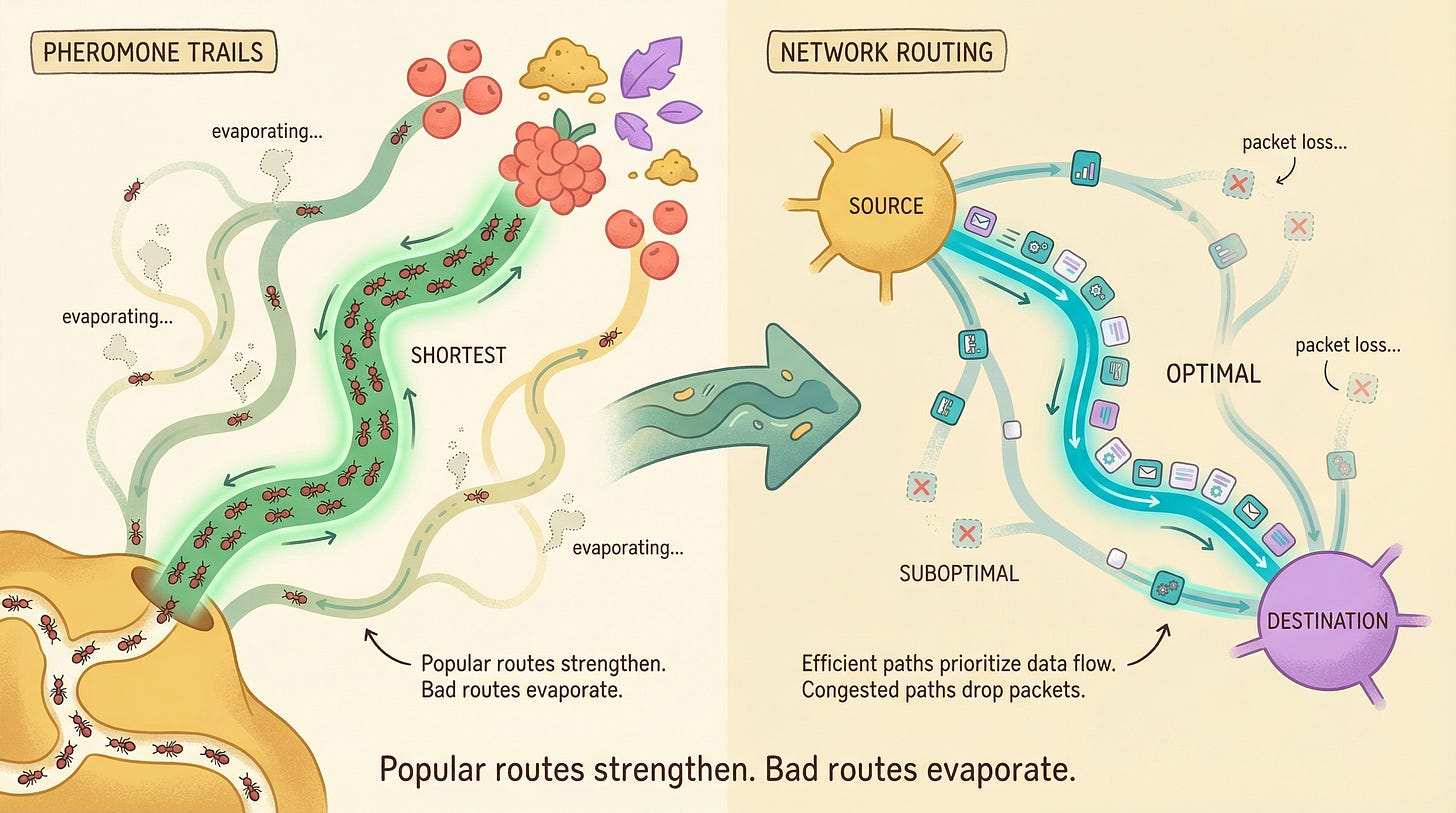

Ants searching for food leave pheromone trails. An ant that finds a short path returns quickly, laying fresh pheromone. That trail stays strong — many ants reinforce it. Longer paths take more time; the pheromone evaporates before reinforcement. Over time, the colony converges on the shortest route. No ant knows the full map.

This is remarkable if you sit with it. The system has a built-in forgetting mechanism. Bad information doesn’t just get deprioritized — it literally disappears. Time itself is the filter.

Marco Dorigo formalized this as Ant Colony Optimization in 1992. Virtual ‘ants’ explore network paths, leaving digital pheromones on good routes. Dutch Railways used it to schedule 7,000+ daily trains with 99% punctuality. It has also been applied to internet packet routing and logistics optimization.
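The evaporate-and-reinforce loop described above can be sketched in a few lines. This is a deliberately tiny, averaged version with one nest, one food source and a handful of candidate paths, not a full Ant Colony Optimization implementation. The function name `colony_shares` and the parameter values are assumptions chosen for illustration.

```python
def colony_shares(lengths, steps=200, evaporation=0.1, deposit=1.0):
    """Return the fraction of pheromone on each path after `steps` rounds."""
    pheromone = [1.0 for _ in lengths]  # every path starts equally attractive
    for _ in range(steps):
        total = sum(pheromone)
        # Ants pick a path in proportion to how strongly it smells.
        share = [p / total for p in pheromone]
        for i, length in enumerate(lengths):
            # Old pheromone evaporates; ants on shorter paths complete more
            # round trips per unit time, so they lay more fresh pheromone.
            pheromone[i] = (1 - evaporation) * pheromone[i] + share[i] * deposit / length
    total = sum(pheromone)
    return [p / total for p in pheromone]

# A short path (length 1) competing with a path twice as long.
print(colony_shares([1.0, 2.0]))
```

The forgetting mechanism is the `(1 - evaporation)` factor. Without it, early random choices would never fade, and the colony could lock onto the long path.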

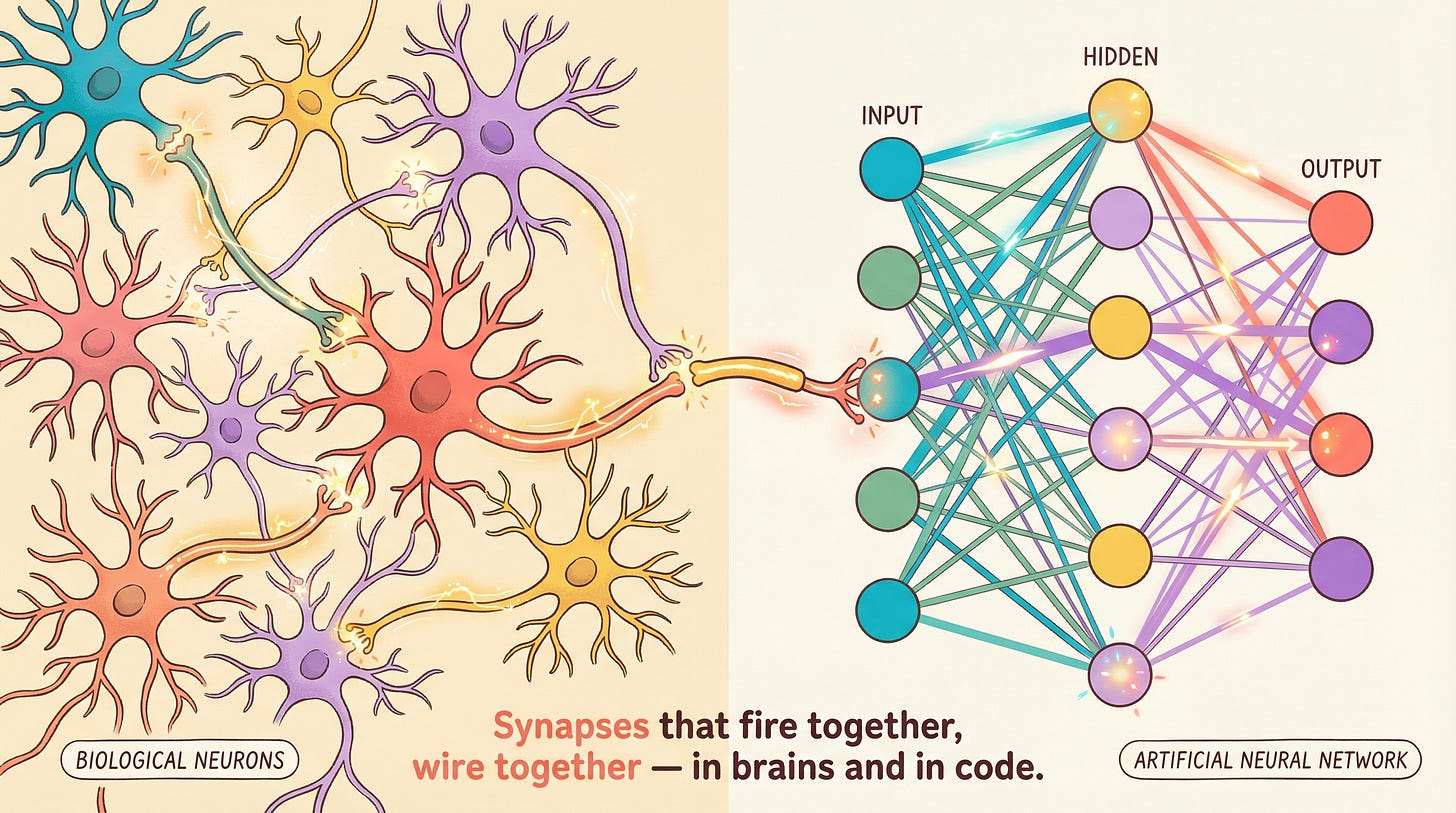

The human brain contains roughly 86 billion neurons connected by on the order of 100 trillion synapses. Learning occurs through Hebbian plasticity: connections that are used frequently grow stronger, while unused ones weaken and are pruned. Information flows through layers: sensory input, pattern recognition, abstraction, decision.

McCulloch and Pitts modeled the first artificial neuron in 1943. Modern neural networks, including the transformer architectures powering tools like Claude, build on the same basic idea: weighted connections between nodes arranged in layers. Backpropagation is a loose engineering analogue of Hebbian learning. It strengthens connections that contribute to correct outputs and weakens those that contribute to errors, but it does so by passing an error signal backward through the network rather than by local co-activation.
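For readers who want to see how small the original idea is, here is a sketch of a threshold neuron in the spirit of McCulloch and Pitts, plus a plain Hebbian weight update. The function names and the learning rate are my own choices for illustration. Real networks use smoother activations and train with backpropagation rather than this rule.

```python
def neuron(inputs, weights, threshold):
    """Fire (1) if the weighted sum of inputs reaches the threshold, else 0."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0

def hebbian_update(weights, inputs, output, rate=0.1):
    """Strengthen each weight whose input was active when the neuron fired."""
    return [w + rate * x * output for w, x in zip(weights, inputs)]

# With both weights at 1 and a threshold of 2, the neuron behaves like AND.
and_gate = [neuron([a, b], [1, 1], threshold=2) for a in (0, 1) for b in (0, 1)]
print(and_gate)  # [0, 0, 0, 1]

# Only the connection from the active input gets stronger.
print(hebbian_update([0.5, 0.5], inputs=[1, 0], output=1))
```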

This is the one most people know. I include it because the lineage matters — it started with someone watching a brain and asking, “what if we could build that?”

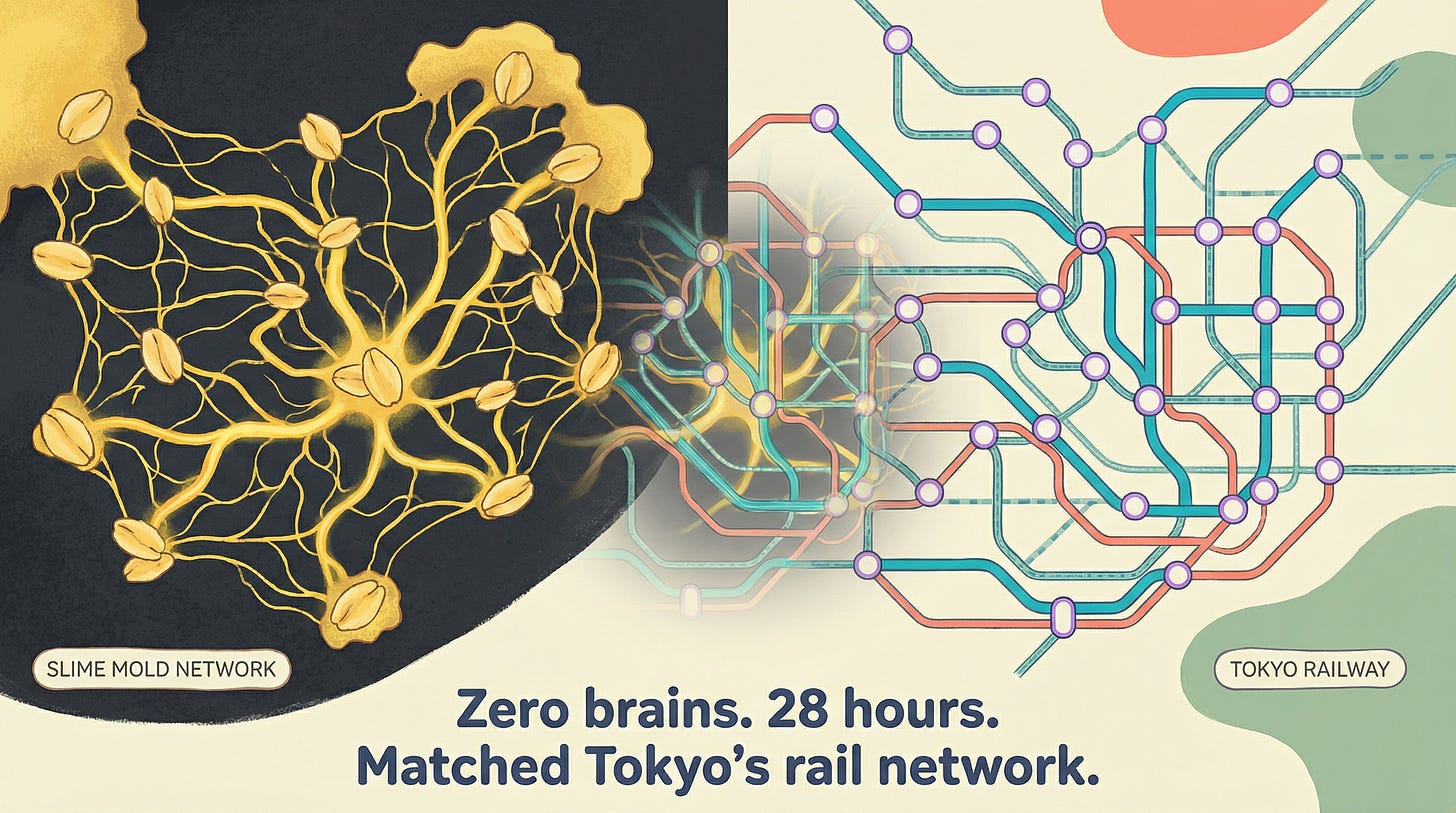

This one is my favorite.

Physarum polycephalum is a single-celled slime mold. No brain, no nervous system, no central intelligence. Atsushi Tero at Hokkaido University placed oat flakes on a wet surface at positions matching cities around Tokyo. In 28 hours, the slime mold grew a network connecting them.

It closely matched Tokyo’s actual railway system.

In some areas, the slime mold’s network was more efficient, with strategic cross-links that improved resilience to disruptions. A brainless organism matched, and in places outperformed, human infrastructure planners in less than two days.

I keep returning to this example because of what it implies about intelligence. We tend to equate intelligence with centralization — a brain, a planner, a CEO. But the slime mold has none of these. It just follows local chemical gradients, and the optimal network emerges. The lesson isn’t “slime molds are smart.” The lesson is that optimization doesn’t require a central optimizer. The substrate can be enough.
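One way to see how a network can emerge from purely local rules is a toy model in which tubes that carry more flow thicken and idle tubes wither. The sketch below is my own illustrative assumption in the spirit of flow-and-conductivity models of Physarum, not the mechanism or model from the Tokyo experiment. It covers only the simplest case, several parallel tubes between the same two food sources, and the name `adapt_tubes` and all parameter values are hypothetical.

```python
def adapt_tubes(lengths, steps=200, dt=0.1, total_flow=1.0):
    """Return each tube's conductivity after repeated local adaptation."""
    conductivity = [1.0 for _ in lengths]  # all tubes start equally thick
    for _ in range(steps):
        # Parallel tubes split the flow in proportion to conductivity / length,
        # the same way parallel resistors split a current.
        ease = [d / length for d, length in zip(conductivity, lengths)]
        total_ease = sum(ease)
        flows = [total_flow * e / total_ease for e in ease]
        # Local rule: a tube grows toward the flow it carries, decays otherwise.
        conductivity = [d + dt * (q - d) for d, q in zip(conductivity, flows)]
    return conductivity

# Two tubes between the same food sources, one twice as long as the other.
print(adapt_tubes([1.0, 2.0]))
```

No tube knows the other's length. Each one responds only to its own flow, and the longer tube fades away.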

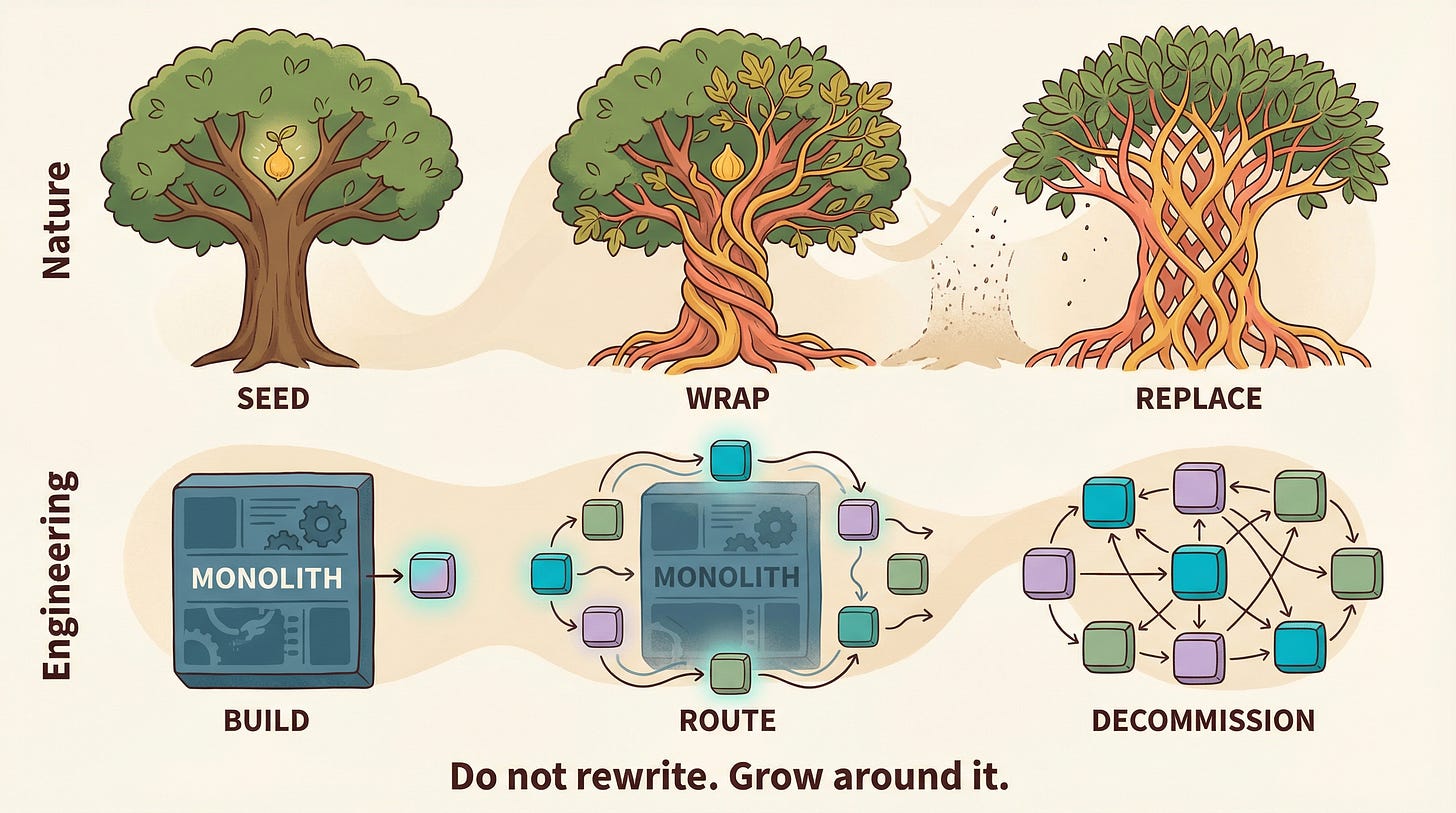

Strangler figs begin life as epiphytes — seeds deposited by birds in a host tree’s canopy. The fig grows roots downward, wrapping around the host trunk, while its canopy grows upward, competing for light. Over decades, the fig’s lattice thickens. The host tree, deprived of light and nutrients, dies and decomposes — leaving the fig standing as a hollow, self-supporting structure.

Martin Fowler named the Strangler Fig pattern after seeing these trees in Queensland rainforests. The engineering translation: instead of a risky ‘big bang’ rewrite of legacy software, you grow new microservices around the old monolith. Route traffic to new services piece by piece. Eventually the monolith handles nothing and can be safely decommissioned. AWS documents this pattern in its migration guidance.

Seed. Wrap. Replace.
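In code, the wrapping step is usually a thin routing layer that sits in front of the old system. Here is a minimal sketch, assuming requests can be told apart by URL path prefix. The class `StranglerFacade`, the handlers and the paths are all hypothetical names for illustration.

```python
class StranglerFacade:
    """Send migrated paths to new services and everything else to the monolith."""

    def __init__(self, legacy_handler):
        self.legacy_handler = legacy_handler
        self.migrated = {}  # path prefix -> handler for the new service

    def migrate(self, prefix, handler):
        """Move one slice of traffic from the monolith to a new service."""
        self.migrated[prefix] = handler

    def handle(self, path):
        # Check longer prefixes first so the most specific route wins.
        for prefix in sorted(self.migrated, key=len, reverse=True):
            if path.startswith(prefix):
                return self.migrated[prefix](path)
        return self.legacy_handler(path)

def monolith(path):
    return f"monolith handled {path}"

def billing_service(path):
    return f"billing service handled {path}"

facade = StranglerFacade(monolith)
print(facade.handle("/billing/invoices"))  # still served by the monolith
facade.migrate("/billing", billing_service)
print(facade.handle("/billing/invoices"))  # now served by the new service
print(facade.handle("/reports/weekly"))    # untouched paths stay on the monolith
```

Callers only ever talk to the facade, so each migration is a one-line routing change that can be rolled back just as easily.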

I love this one because it applies well beyond software. It’s how we approach institutional change at Taleemabad — you don’t tear down the existing system and hope to build a better one in the void. You grow the new thing around the old thing, let it take over gradually, and one day the old structure is gone and nobody noticed the transition. That’s how change sticks.

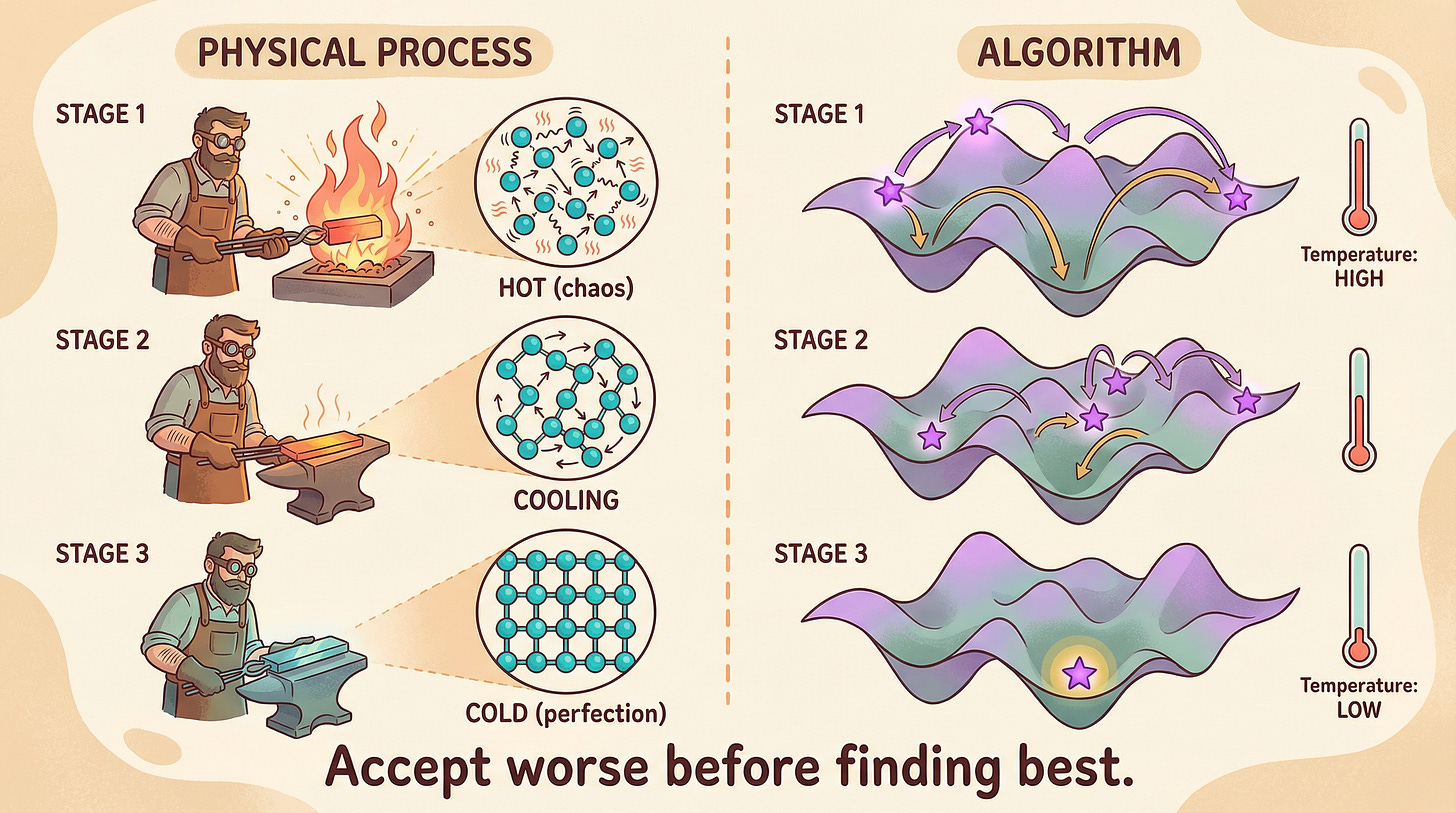

In metallurgy, annealing involves heating metal to a high temperature, where atoms jump around chaotically and break out of defective structures, then cooling it very slowly. As the temperature drops, atoms gradually settle toward their lowest-energy configuration: a near-perfect crystal lattice.

The key insight: you must accept disorder first to reach order. Cooling too fast freezes in defects. Cooling slowly produces perfection.

Kirkpatrick, Gelatt, and Vecchi mapped this to optimization in 1983. At high ‘temperature,’ the algorithm often accepts worse solutions, making big random jumps that keep it from getting stuck in local optima. As the temperature decreases, it becomes increasingly selective, making smaller, more precise moves. With a slow enough cooling schedule, it tends to settle at or near the global optimum.
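The acceptance rule is short enough to show in full. Below is a minimal sketch of simulated annealing on a one-dimensional function, using the standard Metropolis criterion: always accept an improvement, and accept a worse move with probability exp(-delta / temperature). The test function `bumpy`, the cooling rate and the other parameter values are assumptions picked for illustration, not tuned recommendations.

```python
import math
import random

def simulated_annealing(cost, start, step=1.0, t_start=10.0, t_end=1e-3,
                        cooling=0.995, seed=0):
    """Return the best point visited and its cost."""
    rng = random.Random(seed)
    current, current_cost = start, cost(start)
    best, best_cost = current, current_cost
    t = t_start
    while t > t_end:
        candidate = current + rng.uniform(-step, step)
        candidate_cost = cost(candidate)
        delta = candidate_cost - current_cost
        # Worse moves are accepted often while t is high and rarely once it is low.
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            current, current_cost = candidate, candidate_cost
            if current_cost < best_cost:
                best, best_cost = current, current_cost
        t *= cooling  # slow geometric cooling
    return best, best_cost

def bumpy(x):
    """A curve with a shallow valley near x = 3.8 and a deeper one near x = -1.3."""
    return x * x + 10 * math.sin(x)

best, best_cost = simulated_annealing(bumpy, start=5.0)
print(best, best_cost)
```

A purely greedy search started at x = 5 would roll into the nearby shallow valley and stay there. The early high-temperature moves are what give the search a chance to climb out and find the deeper one. The result depends on the random seed and the cooling schedule.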

There is something philosophically satisfying about this. The algorithm that insists on always moving toward “better” gets stuck on mediocre hills. The one willing to accept temporary regression — to get worse on purpose — finds the mountain. I think about this often in the context of organizational strategy. The willingness to accept short-term chaos in exchange for long-term order is a kind of discipline most organizations lack, because they mistake turbulence for failure.

These are the ones I think about the most. The rest are in this slide deck. Do you have any to add? Do you find this topic interesting? If so, reach out. I’d love to share this rabbit hole and have a conversation about it!

Nice bit of re-inspiration on a day when I am sleep deprived and have been struggling for some time to implement some synchronisation algorithms.

I disagree with the "this new and wonderful era of engineering" though. What's so wonderful about it?